Let the UI drive the requirements

Conventional approaches to building software applications start with a Big Idea. Someone needs (to define an application) “a program that gives a computer instructions that provide the user with tools to accomplish a task“.

Someone picks up the big idea and breaks it down into a smaller list of “requirements”, things that the application must do to fulfil the Big Idea. In conventional waterfall development Business Analysts will spend a while documenting the list of requirements in some detail – in agile approaches they will do it as they go in a far more “lightweight” manner. Maybe a technical architect will draw up a blueprint design of the solution. Regardless, soon the developers will start writing code. With either agile or waterfall the user interface usually only starts to emerge at this stage. Until then, everything is coded in words (and maybe some boxes and arrows showing flow). Only late in the process does the UI emerge.

Returning to that definition of an application, IT development seems to spend most of its time in the “a program that gives a computer instructions” world, and often looses sight of “provid[ing] the user with tools to accomplish a task”.

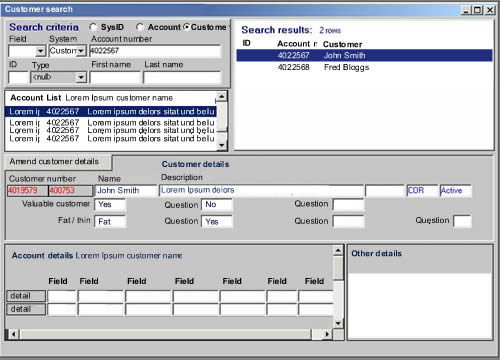

How about a different focus. Start with the user interface. Take the Big Idea and illustrate it. Draw pictures of what the tools will look like, how the user will interact with them. Sketch storyboards, wireframes, lo-fi prototypes, paper mock-ups, call them what you will. Use this as the primary requirements document, supported by explanatory text and detail where we required. These may be stories or use cases, but let the visual representation of the big idea drive the requirements rather than it being almost an afterthought. Start with the UI and specify the functionality from that rather than the other way round. After all, it will be the UI that the user uses to accomplish their task, not the computer instructions.